My writing automation isn’t quite finished yet, but with all the buzz around Claude Code lately, I felt like I had to check it out. I decided to dust off an idea from my backlog of personal projects: making AIs play the board game Diplomacy against each other. A quick YouTube search shows plenty of people have already done this, so it’s nothing new. But it’s one thing to watch others do it, and another thing entirely to try it yourself. It was one of those “this would be fun to build someday” ideas that I’d outlined in my head and then shelved, and now it seemed like the perfect use case.

The feature of Claude Code I was most excited to try was its remote control capability. I had actually tried to set up a remote development environment with OpenClaw before, and while it wasn’t impossible, I eventually concluded it wasn’t very convenient and gave up. But if Claude Code supported it natively, that was a different story. The setup instructions looked simple enough: open a remote control session on the host machine, then launch the Claude app on the remote machine and find the session in the Claude Code tab. It was almost absurdly simple, and it just worked.

Determined not to touch a single line of code myself, I started by creating an ADR (Architecture Decision Record) and a spec document. Even though everything ran in a single session, I cycled through three agent personas (a Solution Architect, a Tech Lead, and a Software Engineer) to draft the documents. It felt like I was leading a meeting with a team of three. The process went something like this: “Okay, you draft this document.” Then, “What’s your take on what they wrote?” “It’s mostly good, but this and that need improvement.” “Hey, they want you to fix this. Go back and do it again.” This back-and-forth took about four hours of elapsed time, not four hours of continuous work: that figure includes all the idle stretches while I was off doing other things and coming back to check on the progress.

Next, I had them start the implementation over the remote session. It took four attempts to get it right. The first two attempts failed on the host side with some kind of environment variable error. On the remote end, the connection just dropped, and I only saw the error message when I finally checked the host. I’m not sure whether it was a bug in Claude or something else. For the third attempt, I had the host session running but couldn’t connect from the remote machine for a few days. When I finally tried, the connection failed; looking at the host, I saw it had terminated the session after a day of inactivity. I think that’s a reasonable security feature.

The fourth attempt was the charm. There was one key difference from the first two tries. Initially, I had asked the AI to create an implementation plan, confirmed its six-step plan, and then told it to proceed on its own. In the final, successful attempt, I instructed it to implement the plan step-by-step. I’d wait for a report that step 1 was complete before telling it to start step 2. Did this make a difference? Or was it something else? I’m not sure.

Anyway, with the initial implementation done, it was time to verify that it worked, which I had to do locally. The UI was specced to run only on a local machine, so I couldn’t test it remotely. My original intention was actually to build a remotely viewable UI to keep the entire process remote. However, as I discussed the ADR and specs with the agents, we shifted direction toward a local UI. Building a remote UI would have required additional specs for authentication and data exchange, not to mention a small but non-zero cost. The goal was to experiment with Claude on a project, not to achieve a fully remote setup, so this felt like a reasonable compromise.

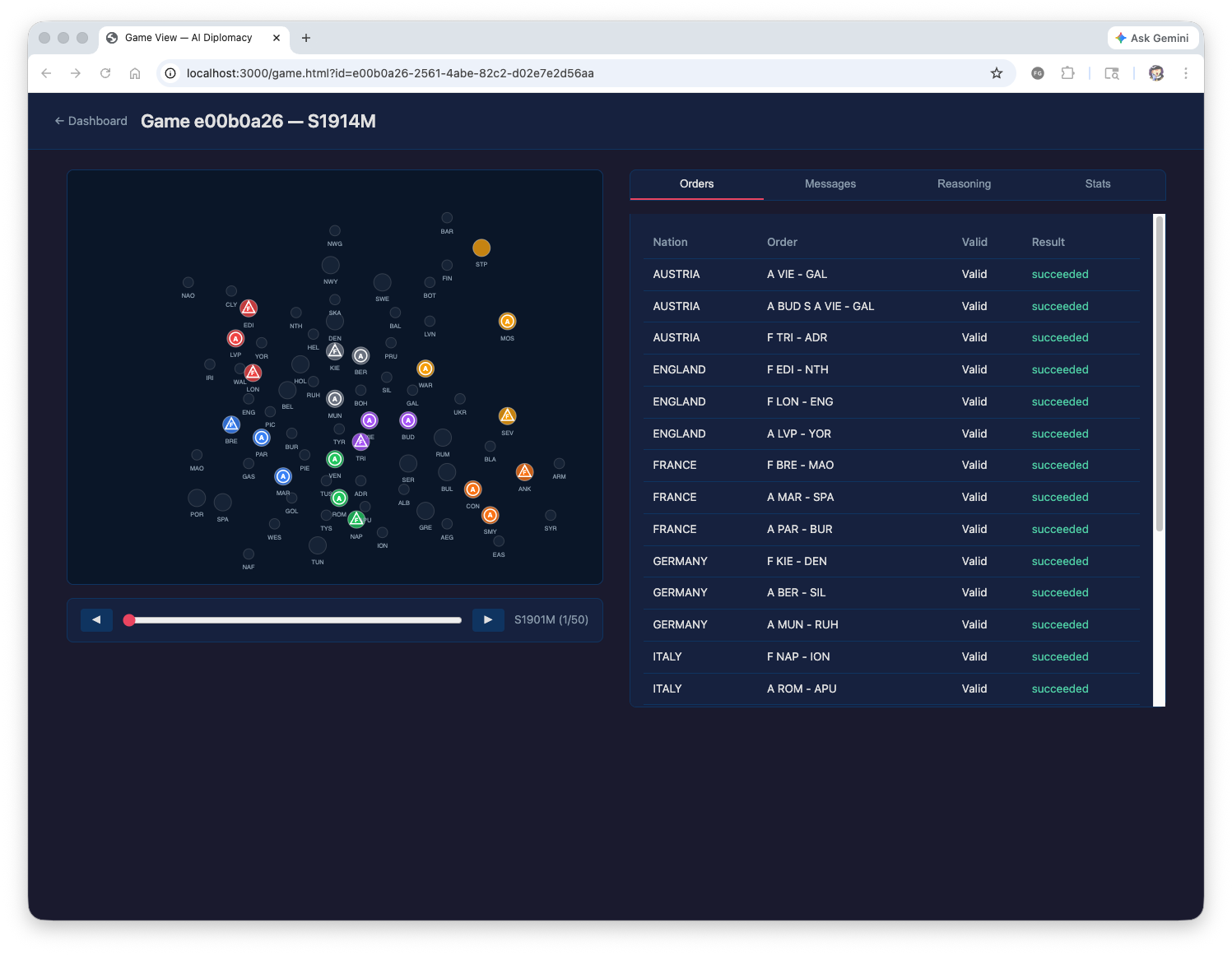

Running it locally revealed a few minor issues that needed fixing, but it basically worked. The problems were pretty trivial:

- There was a `run-dashboard.sh` file for launching the dashboard, but no `run-simulation.sh` for the game simulation itself. (The simulation logic was all there, just no entry point.)

- I had connected the LLM through the Claude CLI, but the code was passing the wrong options, so the calls were failing.

- The AI players were supposed to be given the game rules, but the prompt that should have contained the rules was empty.
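The empty rules prompt is the kind of bug that fails silently: the model still answers, just without knowing the rules. Below is a minimal sketch of a fail-fast guard, assuming the rules are loaded as a plain string before the prompt is built. `build_system_prompt` and `EmptyPromptSectionError` are hypothetical names for illustration, not the project’s actual code.

```python
class EmptyPromptSectionError(ValueError):
    """Raised when a required section of a prompt is blank."""


def build_system_prompt(rules_text: str, power: str) -> str:
    """Assemble the system prompt for one power (hypothetical helper).

    Raises instead of silently sending a prompt that contains no rules.
    """
    rules = rules_text.strip()
    if not rules:
        # Whitespace-only or empty rules are treated as a configuration error.
        raise EmptyPromptSectionError("the rules section of the prompt is empty")
    return (
        f"You are playing {power} in a game of Diplomacy.\n\n"
        f"Rules:\n{rules}"
    )
```

Building the prompts once at startup, before the first phase, would surface this kind of problem immediately rather than after a full run.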

There were some other UI-related issues, but they didn’t affect execution, so I put them aside for later. With that, I kicked off the simulation for real, feeling like “this is it!”… only for the game to end in 1901. Okay, something was definitely not right. After investigating, I found three main problems:

- The Claude CLI was often returning an empty string.

- It was consuming way too many tokens. (It completely burned through my 5-hour allowance on the Max plan.)

- A stalemate was being triggered far too quickly.

The third problem was a clear design and implementation flaw. There was a rule to end the game in a stalemate if no territories had changed hands for a certain period, and Claude had implemented it by counting *phases* instead of *years*. Since each game year is split into several phases (spring and fall movement, their retreats, and winter adjustments), a limit meant to span years was being reached within a fraction of that time. No wonder the stalemate was happening so fast. After fixing that, I moved on to optimizing token usage. I consulted Claude, which suggested a few improvements that I accepted and had it implement. I also asked it to add retry logic to handle cases where a valid response wasn’t returned.
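Counting years rather than phases means deriving the year from each phase. The sketch below assumes phase names in the `S1901M` / `F1901R` / `W1901A` style (season letter, four-digit year, phase-type letter) and a chronological history of territory-ownership snapshots. `phase_year` and `is_stalemate` are hypothetical helpers, not the project’s code.

```python
import re
from typing import Mapping, Sequence, Tuple

# Assumed phase-name format, e.g. "S1901M" (spring 1901 movement),
# "F1901R" (fall 1901 retreats), "W1901A" (winter 1901 adjustments).
PHASE_RE = re.compile(r"^[SFW](\d{4})[MRA]$")


def phase_year(phase: str) -> int:
    """Extract the game year from a phase name."""
    match = PHASE_RE.match(phase)
    if match is None:
        raise ValueError(f"unrecognized phase name: {phase!r}")
    return int(match.group(1))


def is_stalemate(
    history: Sequence[Tuple[str, Mapping[str, str]]],
    stalemate_years: int,
) -> bool:
    """Return True if ownership has been unchanged for `stalemate_years` years.

    `history` is a chronological list of (phase name, {territory: owner}).
    The threshold is measured in game years, not in number of phases.
    """
    if not history:
        return False
    current_phase, current_owners = history[-1]
    current_year = phase_year(current_phase)
    # Walk backwards through the unbroken run of identical snapshots to find
    # the year in which the current ownership was first observed.
    stable_since = current_year
    for phase, owners in reversed(history):
        if dict(owners) != dict(current_owners):
            break
        stable_since = phase_year(phase)
    return current_year - stable_since >= stalemate_years
```

With six unchanged phases spanning 1901 and 1902, a phase-based threshold of 2 would already have fired, while `is_stalemate(history, 2)` still returns `False` until the game reaches 1903.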

Time to run it again!

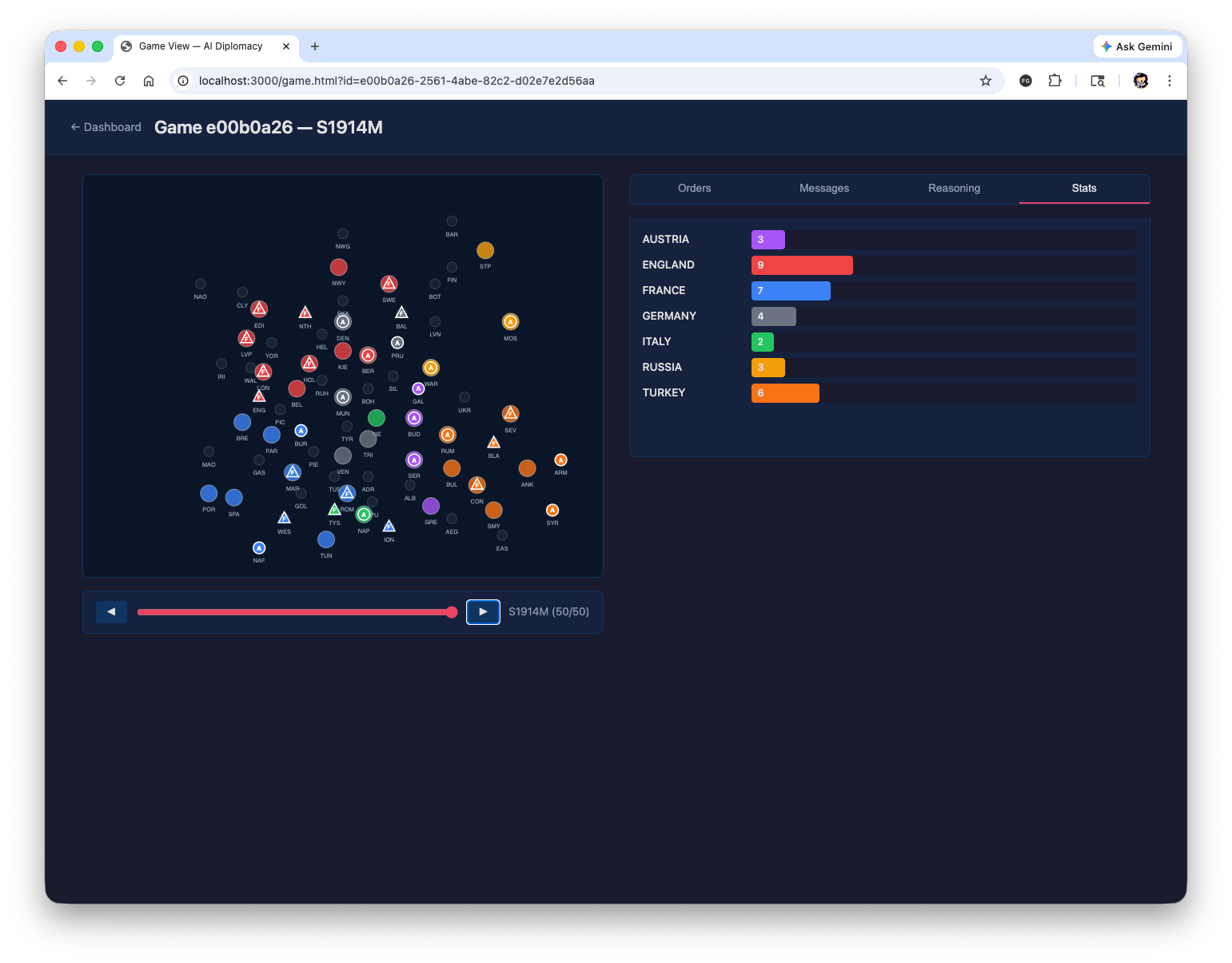

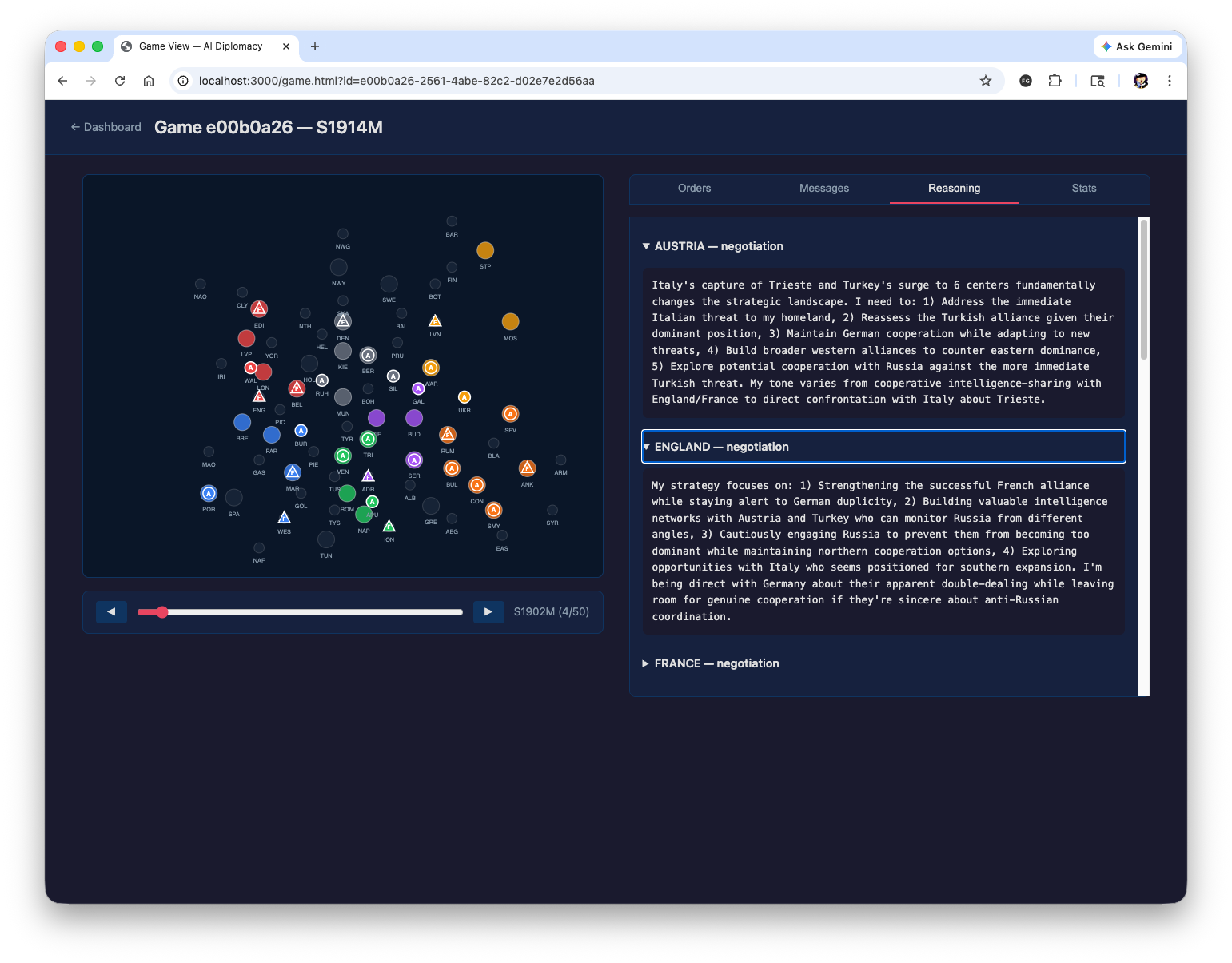

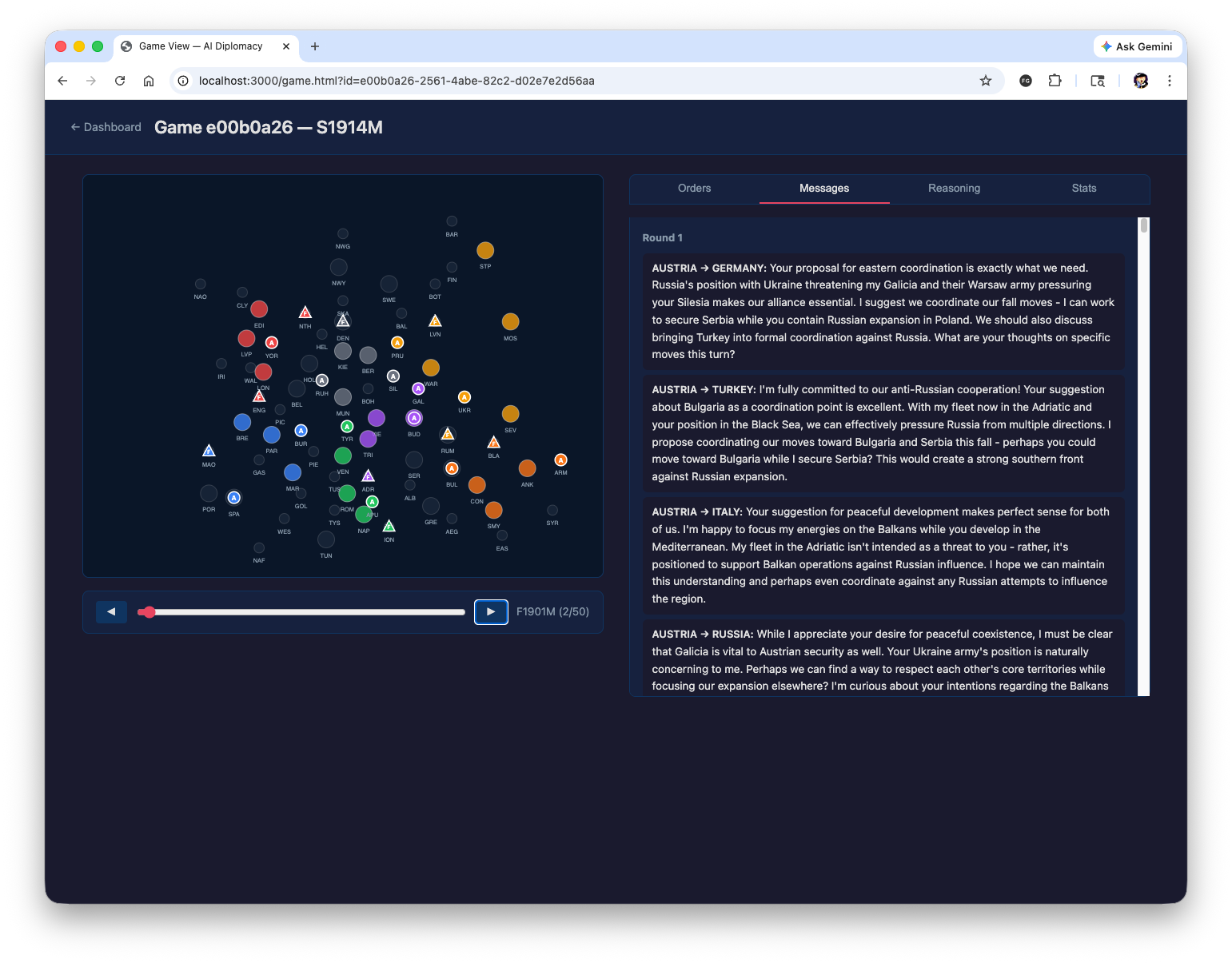

The empty string issue persisted, but thanks to the retry logic, I got a valid response most of the time. In some cases it failed even after five retries, but there wasn’t much I could do about that. Switching to the API would probably be more robust, but that would cost money. Token usage was also noticeably lower than in the first version, though it still managed to hit the 5-hour limit once more. Thanks to the revised stalemate condition, however, the game didn’t end this time; there was just a brief period where all the countries were twiddling their thumbs. The game took about 16 hours to complete. No single country captured the 18 supply centers needed for a solo victory, so the game ended in 1915 when it hit the maximum turn limit. For what it’s worth, Great Britain came out on top.
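The retry behavior described above can be sketched as a small wrapper around whatever function invokes the model. It assumes `call_llm` takes a prompt and returns the raw response text, which may be empty. The exponential backoff between attempts is my own addition, and the default of five attempts only loosely mirrors the post. Returning `None` leaves the fallback (for example, having the power hold all units) to the caller.

```python
import time
from typing import Callable, Optional


def call_with_retry(
    call_llm: Callable[[str], str],
    prompt: str,
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[str]:
    """Call `call_llm` until it returns non-blank text or attempts run out."""
    for attempt in range(max_attempts):
        response = call_llm(prompt)
        if response and response.strip():
            return response
        if attempt < max_attempts - 1:
            # Exponential backoff: base_delay, 2x, 4x, ... between attempts.
            sleep(base_delay * (2 ** attempt))
    # Every attempt came back empty; let the caller decide what to do.
    return None
```

Injecting `sleep` as a parameter keeps the wrapper testable without real delays.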

My main goal here was to see how far I could get without ever opening a code editor, and I’d say that was a complete success. While I did manually run some long-running commands like the game simulation outside of Claude for practical reasons, everything else—git commits, pushes, editing config files, backing up files—was handled within the Claude session. There were times I needed to read the ADR and spec documents, and I just did that directly on GitHub. In terms of functionality, I didn’t feel like anything was lacking. It really felt like I was just verbally delegating tasks to a team member.

As for whether this improves development efficiency, my answer is yes and no. It was definitely a huge help during the design and implementation phases. That part was incredibly fast. The actual working time it took to get to a first runnable version was about six hours; I suspect it would have taken me several days on my own. Evaluating external packages was particularly convenient. The project uses the `diplomacy` package for parsing orders, and Claude did a thorough job of reviewing its features, supported functions, and Python compatibility. That task alone would have eaten up a few hours of my time.

On the other hand, diagnosing and fixing bugs didn’t feel much different from doing it myself. Especially for problems whose root cause lies outside the code, like an integration issue, you still have to add logs and check the output, just as a human would. It’s convenient, but I wouldn’t call it more efficient. Maybe there’s a way around this that I’m not aware of, given my limited experience with AI.

Now that I’ve confirmed it works, it’s time for further improvements. I had Claude jot down notes on things that needed fixing as I monitored the progress. Some things, like the unimplemented map and the serial LLM calls, were intentional choices for the first version. But now that it’s functional, those areas need improvement. The negotiation messages are also overly verbose with a lot of fluff, so trimming those down would likely save on token usage. Since I’ve started, I might as well dig a little deeper.
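Because Diplomacy orders are written simultaneously, collecting each power’s orders is a natural candidate for replacing the serial LLM calls with concurrent ones. Below is a sketch using a thread pool, assuming a hypothetical `request_orders(power)` function that performs one blocking LLM call and returns the response text. Threads suit this because the work is mostly waiting on I/O. This would cut wall-clock time, not token usage, and it may hit rate limits sooner.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Sequence


def collect_orders_parallel(
    powers: Sequence[str],
    request_orders: Callable[[str], str],
    max_workers: int = 7,
) -> Dict[str, str]:
    """Ask every power for its orders concurrently instead of one at a time."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in input order, so they line up with
        # `powers`; an exception in any call is re-raised here.
        results = pool.map(request_orders, powers)
        return dict(zip(powers, results))
```

Negotiation rounds are a different story, since later messages may depend on earlier replies, so they may not parallelize as cleanly.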